Eight million videos is a lot for YouTube to delete, but that is what the company did in the fourth quarter of 2017. Almost all of the videos were pinpointed by its own moderator: AI. According to the internal report, almost 76% of the 6.7 million videos flagged by AI had not been viewed even once.

As the report suggests, most of the videos were either first-time upload attempts or pornography uploads, which violate YouTube's terms. Such videos take up a great deal of space on YouTube's servers, so a purge looked necessary.

YouTube has recently employed machine learning, or artificial intelligence, to identify and flag content that is inappropriate and not in line with its policies. Since June of last year, the amount of improper video content on YouTube has gone up. YouTube has confirmed that the introduction of AI has helped it recognize and delete such content quickly.

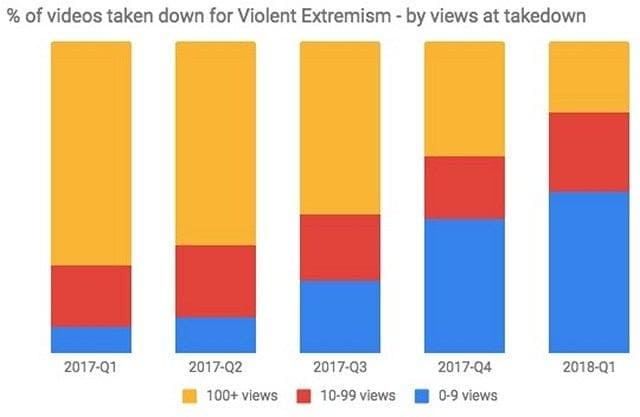

Many of the removed videos contained violent extremism and were flagged manually; they had not been viewed even 10 times. “Our advances in machine learning enabled us to take down nearly 70 percent of violent extremism content within 8 hours of upload and nearly half of it in 2 hours,” the report said.

This is a one-of-a-kind internal report, released by YouTube under the name Community Guidelines Enforcement Report, that records in detail the measures YouTube and Google have taken to provide clean and useful content to viewers. The appropriateness of content plays a huge part in whether online platforms are used to spread violence and hatred against a particular community or nation.

Therefore, it is essential for a platform as large as YouTube to maintain a clean video platform that offers a level playing field to all. To this end, YouTube devoted 10,000 employees to sorting videos and removing disturbing content.

In addition, YouTube has joined hands with many NGOs and government agencies to enforce the rules and raise awareness about posting violent content.
